More edge trends in earth observation

Realizations from the progress so far and some speculations

Edge computing for space seems to be common knowledge in the space industry now. Policymakers are aware of it. Satellite operators have plans to incorporate it into design and practice. Tasking-to-delivery latency is the problem to solve first, cost after that. It makes sense. Aravind’s latest rightly says “Edge computing for EO is no longer a technology demonstration. It is becoming part of the invisible infrastructure that is EO.”

Let’s try understanding the scale of the problem.

Realizations on the larger landscape

Due to advancements in sensor technology (spectral, i.e. hyperspectral; spatial resolution; sensor type, e.g. radar), data volume has increased dramatically. Modern hyperspectral satellites capture hundreds of wavelength bands at 5 m, compared to the tens of wavelength bands collected by traditional systems at 30 m. That is 36 times more pixels per unit area and roughly ten times more bands, or say 300 times more information. However, ground station capacity is constrained by the technology: X-band systems give 100s of Mbps, or up to 1 Gbps, while optical communication systems (which are awesome) can give more. Ground station capacity is increasing at ~13% CAGR, but data generation is growing faster. Basically, the utilization of onboard sensors is very limited. Even with advanced optical comms and relays, some studies indicate that utilization can (with some assumptions) increase only from 0.1% to 4%.
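As a rough check on these numbers, here is a minimal back-of-the-envelope sketch in Python. The band counts (20 vs 200), the 100 GB data volume and the 1 Gbps link rate are illustrative assumptions, not figures from any specific mission, and the function names are made up for this example.

```python
def information_ratio(gsd_old_m, gsd_new_m, bands_old, bands_new):
    """Samples per unit ground area of a new sensor relative to an old one."""
    # Halving the ground sample distance quadruples the pixel count per area.
    pixel_ratio = (gsd_old_m / gsd_new_m) ** 2
    band_ratio = bands_new / bands_old
    return pixel_ratio * band_ratio


def downlink_seconds(data_gigabytes, link_mbps):
    """Ideal time to downlink a data volume, ignoring protocol overhead."""
    bits = data_gigabytes * 8e9          # 1 GB taken as 10^9 bytes
    return bits / (link_mbps * 1e6)      # Mbps -> bits per second


# Assumed: 20 bands at 30 m vs 200 bands at 5 m.
print(information_ratio(30, 5, 20, 200))   # 360.0

# Assumed: 100 GB collected, 1 Gbps (1000 Mbps) X-band link.
print(downlink_seconds(100, 1000))         # 800.0
```

With these assumed inputs, 100 GB needs about 13 minutes of ideal link time, which is longer than a typical low-Earth-orbit ground station pass. That is the utilization problem in miniature.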

In other words:

The rate at which data is being produced in space is far outstripping the capacity to downlink it.

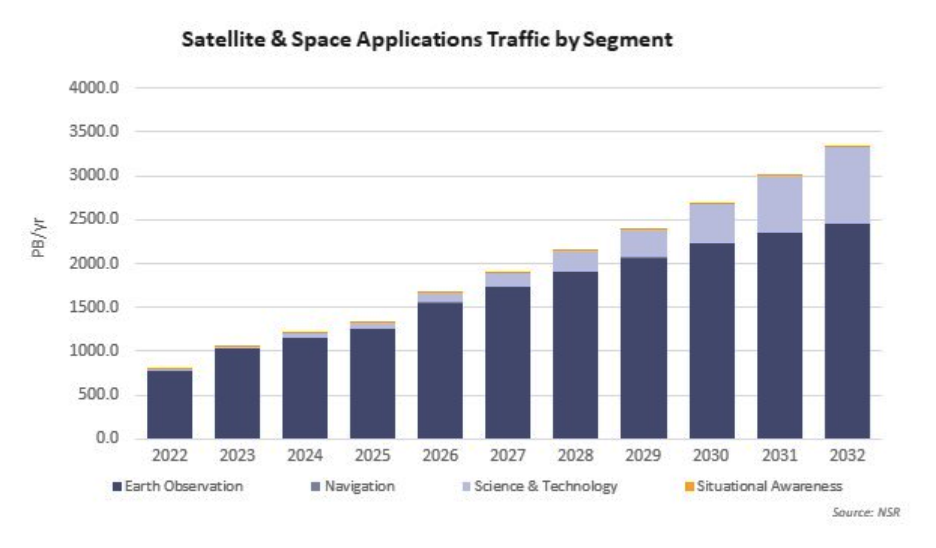

Earth observation will generate about 2 exabytes (EB) of data by 2032, growing at a CAGR of 11%. EO data accounted for about 86% of the cumulative data generated by the space applications segment. EdgeAI will be a necessary technology to reduce downlink data volume (oftentimes by 50-90%); we ourselves demonstrated an 80% reduction. Moreover, data storage and compute costs on the cloud will also play a role. Moving to storing only relevant data (enabled by edge) can save 50-90% of the cost, according to our case study.
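To make the idea of downlink reduction concrete, here is a minimal sketch of onboard filtering in Python. It is not our actual pipeline: the `Tile` structure, the `relevance` score (standing in for the output of some onboard model, e.g. a cloud or change detector) and the 0.5 threshold are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Tile:
    tile_id: str
    size_mb: float
    relevance: float  # hypothetical onboard model score in [0, 1]


def select_for_downlink(tiles, threshold=0.5):
    """Keep only tiles the onboard model considers relevant enough."""
    return [t for t in tiles if t.relevance >= threshold]


def reduction_fraction(tiles, kept):
    """Fraction of the captured data volume that is NOT downlinked."""
    total = sum(t.size_mb for t in tiles)
    sent = sum(t.size_mb for t in kept)
    return 0.0 if total == 0 else 1.0 - sent / total


# Illustrative capture: five equal-size tiles, only one scored as relevant.
tiles = [
    Tile("t0", 100.0, 0.90),
    Tile("t1", 100.0, 0.10),
    Tile("t2", 100.0, 0.20),
    Tile("t3", 100.0, 0.05),
    Tile("t4", 100.0, 0.30),
]
kept = select_for_downlink(tiles)
print([t.tile_id for t in kept], round(reduction_fraction(tiles, kept), 2))
```

The same fraction carries over to cloud storage, since tiles that are never downlinked are never stored or processed on the ground.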

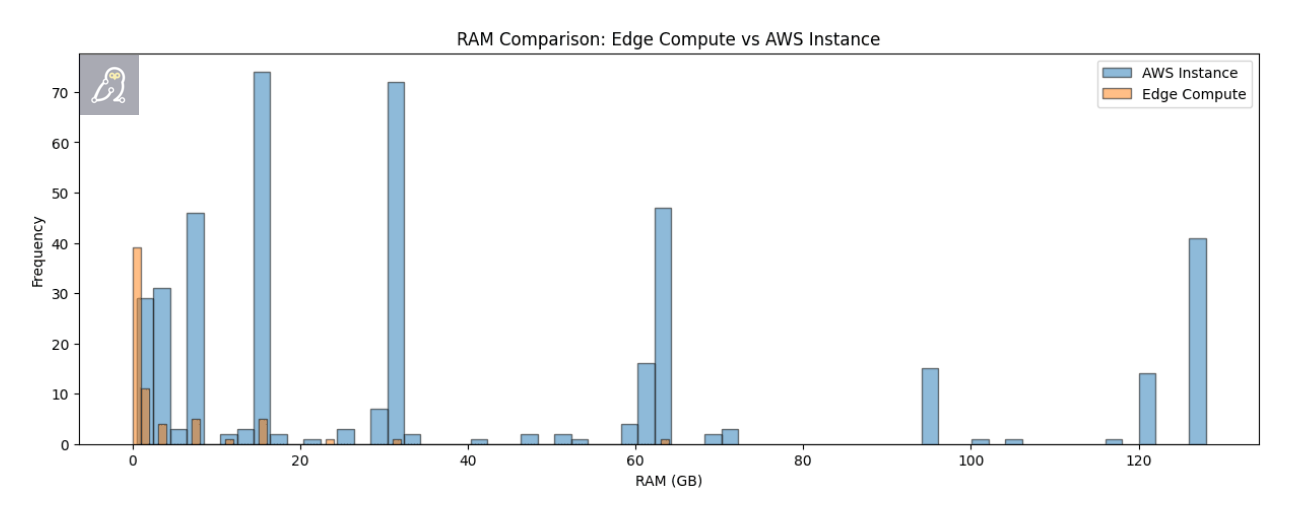

CPU-, GPU-, VPU- and FPGA-based machines are widely available for spacecraft nowadays, as compiled in the NASA State-of-the-Art of Small Spacecraft Technology: Small Satellite Avionics report or this 2026 presentation from Kacprzak. Google plans to put TPUs in space. When you compare the memory requirements (among other specs) of such machines, it is clear that enough commercial edge devices are capable of performing a lot (if not all) of the compute work typically done on the ground. Several premium-grade hardware options come with the long-lifetime guarantees required for, say, governmental space missions.

The edge hardware is now good enough that most of the compute earlier reserved for the ground can be done in space.

Considering the satellite development plans of different countries, developed countries will be the first to realize the advantages of low latency and intelligent data streaming. Countries with relatively small satellite constellations may be more incentivized to adopt edge technologies because of the cost efficiency that edge will bring.

A Speculative Timeline

2026: Edge proves it’s not a gimmick

Given the serious advantage provided by real-time satellite-based intelligence, as seen in the war between the US and Iran in 2026, the value of edge computing as an essential technology for satellites is felt immediately in the US, Europe, China, East Asia and even in countries like India.

This is the year when edge computing in space transitions from demonstrations to selective operational validation for all mature space powers. Early deployments may focus on Earth Observation and ISR (Intelligence, Surveillance, Reconnaissance), with the primary objective of reducing downlink volume and latency via onboard filtering and inference.

If all goes to plan, we may also have a winner in the XPRIZE Wildfire Space-Based-Detection and Intelligence Finals 2026. Each team will need to identify all active wildfires within just one minute and provide a detailed analysis of each fire’s behavior, such as its perimeter, direction of spread, and intensity, within 10 minutes. The teams will also be challenged to minimize false positives (incorrectly identifying non-fire heat sources like solar panels or hot surfaces as fires), which is crucial for ensuring that resources are deployed effectively in real-life scenarios. The winning teams will prove something essential: wide-area, low-latency fire detection by global teams with a real-world use case like Australian wildfires.

2027-2028: Edge becomes part of the architecture

By 2027, edge computing stops being a payload feature and starts becoming a baseline architectural assumption. The key shifts are integration with inter-satellite links (ISL) and software-defined payloads, and the emergence of multi-node processing across constellations. Satellites begin acting as cooperative compute nodes. Task distribution and in-orbit workload sharing become feasible. A supporting trend: space network architectures are increasingly designed for networked, autonomous, software-defined systems.

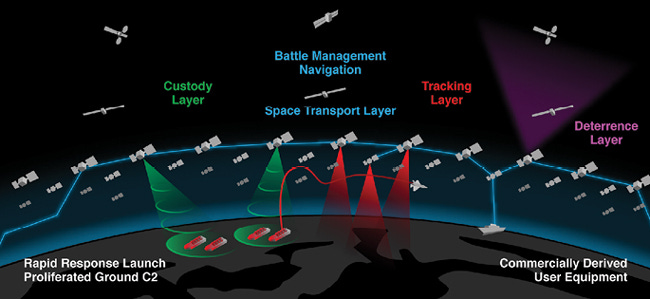

By 2027, the US is expected to complete Tranche 1 of the PWSA architecture by launching 28 satellites in the Tracking layer, using at least IR with edge computing to track ‘interesting’ signals, as seen in their graphic below.

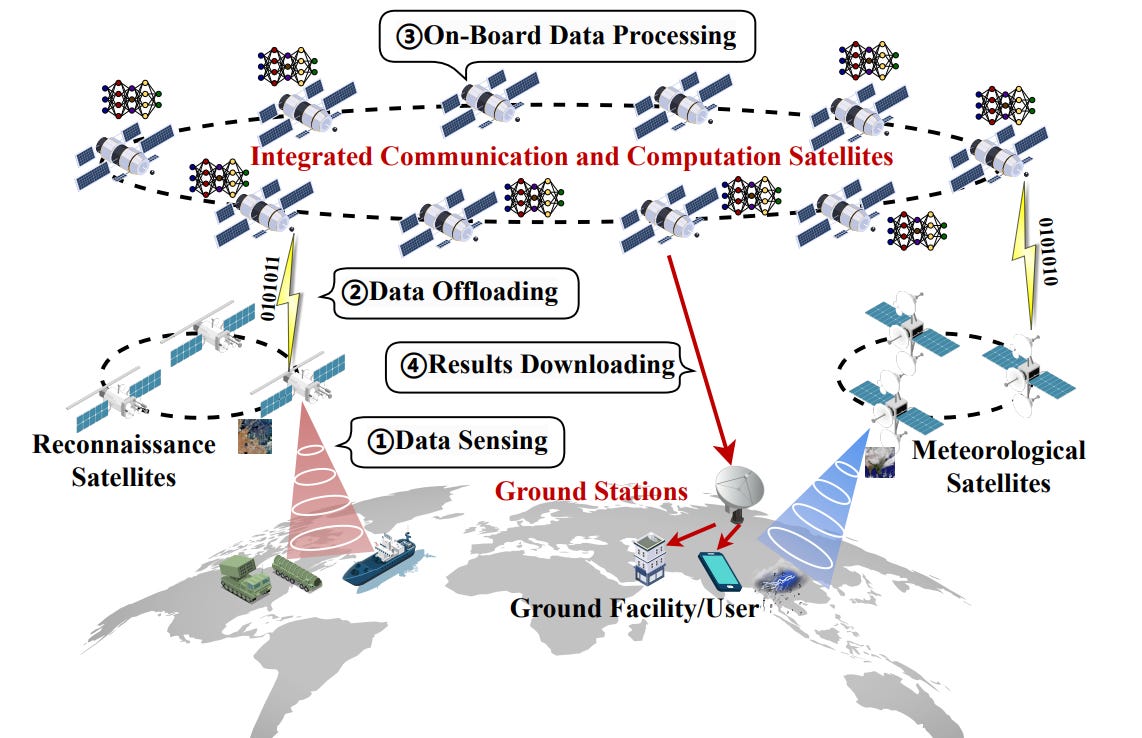

The Chinese, per a recent paper, have interesting architecture propositions of their own. Large AI models (LAMs) on massive supercomputers, “on-board data processing by leveraging the integrated communication-and-computation capabilities in space computing power networks … enhancing the timeliness, effectiveness, and trustworthiness for remote sensing downstream tasks” and “a microservice-empowered satellite edge LAM inference architecture” make it seem like an engineering-first thought process. Many of the satellites in the architecture map to recently launched constellations.

I expect Europe to invest in the Virtual Constellation / Sensorweb technology seriously (including into private constellation providers) as well.

2029-30: Autonomy Phase: Satellites start making decisions

This is where things get slightly uncomfortable for traditional ground control.

Key capabilities emerge, like event-driven tasking (e.g. detection → onboard decision → retasking) and reduced human-in-the-loop involvement in prioritization, routing and scheduling. Demonstrators like the dynamic tasking done by Ubotica and NASA are already showing a lot of promise in 2026 itself. One can only imagine that such limited autonomous decision making may become common by 2029-2030. Given the trend of increasing constellation density, the demand for real-time responsiveness in disaster monitoring and defense scenarios will be met much better.
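The detection → onboard decision → retasking loop can be sketched as a small priority queue. This is a minimal illustration under my own assumptions, not how Ubotica or NASA implemented dynamic tasking: the `Task` and `OnboardScheduler` names, the confidence threshold of 0.8 and the priority numbers are all hypothetical.

```python
import heapq
from dataclasses import dataclass, field


@dataclass(order=True)
class Task:
    priority: int                                  # lower number = more urgent
    target: str = field(compare=False)
    reason: str = field(compare=False, default="scheduled")


class OnboardScheduler:
    """Toy task queue that lets onboard detections jump ahead of routine work."""

    def __init__(self):
        self._queue = []

    def add(self, task):
        heapq.heappush(self._queue, task)

    def next_task(self):
        return heapq.heappop(self._queue) if self._queue else None

    def on_detection(self, target, confidence, threshold=0.8):
        """Onboard decision: retask only when the detection is confident."""
        if confidence < threshold:
            return False
        self.add(Task(priority=0, target=target, reason="event"))
        return True


scheduler = OnboardScheduler()
scheduler.add(Task(5, "routine_strip_A"))
scheduler.add(Task(3, "routine_strip_B"))

# A hypothetical onboard model flags a hotspot with high confidence.
scheduler.on_detection("hotspot_cell_17", confidence=0.93)
print(scheduler.next_task().target)   # hotspot_cell_17
```

The human-in-the-loop reduction lives in `on_detection`: nobody on the ground approves the retasking, and the threshold is the only guardrail in this toy version.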

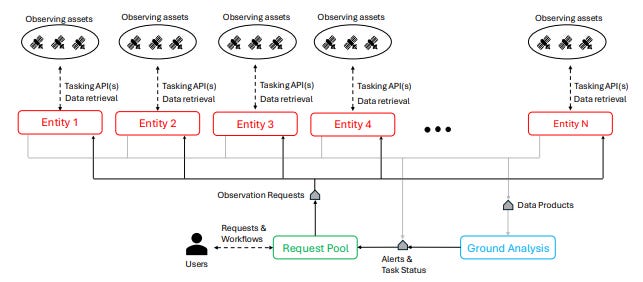

As per a recent paper called Federated Autonomous Operations: A New Paradigm for Large-Scale Observation Systems, satellites could be imagined as observing assets (agents) belonging to federated entities (e.g. private constellation operators), where federations can communicate with each other but agents from two different federations cannot. As the paper explains:

“For example, consider the workflow “observe volcano daily with TIR (Thermal Infrared) and if thermal emitting, observe as much as possible with TIR until not thermal emitting”. This gets translated to a 3-step workflow: (1) Daily periodicity request to observe with TIR with end condition of thermal emission detection, (2) Continuous request to observe with TIR with end condition of no thermal emission detection, (3) End observation (or alternatively repeat step 1). The goal of the federation is to collectively satisfy all the requests in R during the horizon H while adhering to all constraints of the agents A. These constraints include data volume limits, mechanical restrictions such as slewing, energy usage, and more. These constraints are known by the federation controlling each agent, but not by other federations.”
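The three-step volcano workflow in the quote reads naturally as a small state machine. The sketch below is my own reading of it, not code from the paper: the `Mode` names and the `repeat` flag (which chooses between "end observation" and "repeat step 1") are assumptions made for illustration.

```python
from enum import Enum


class Mode(Enum):
    DAILY = "daily TIR observation"            # step 1
    CONTINUOUS = "continuous TIR observation"  # step 2
    ENDED = "observation ended"                # step 3


def next_mode(mode, thermal_emission, repeat=True):
    """Advance the workflow after one TIR observation."""
    if mode is Mode.DAILY:
        # End condition of step 1: thermal emission detected.
        return Mode.CONTINUOUS if thermal_emission else Mode.DAILY
    if mode is Mode.CONTINUOUS:
        # End condition of step 2: no thermal emission detected.
        if thermal_emission:
            return Mode.CONTINUOUS
        return Mode.DAILY if repeat else Mode.ENDED
    return Mode.ENDED


def run(observations, repeat=True):
    """Return the mode the workflow is in after each observation."""
    mode = Mode.DAILY
    history = []
    for emitting in observations:
        mode = next_mode(mode, emitting, repeat)
        history.append(mode)
    return history


# Quiet, then an eruption seen twice, then quiet again.
for m in run([False, True, True, False]):
    print(m.name)
```

This only models the request logic. The constraints the quote mentions (data volume, slewing, energy) would sit inside each federation's own scheduler and are left out here.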

The scheduling for such tasks would fall somewhere between decentralized allocation, if operators talk to each other, and federations bidding for such task requests and then distributing them among their observing assets. Constellations begin behaving like loosely coordinated autonomous systems rather than centrally commanded assets.
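The bidding variant can be sketched as a simple sealed-bid allocation. To be clear, this is not the algorithm from the paper; it is a toy illustration in which every name (`Agent`, `Federation`, `award`), the flat cost-per-MB pricing and the single data-volume constraint are my assumptions. The point it shows is that each federation bids using constraints only it can see, and then picks one of its own assets.

```python
from dataclasses import dataclass


@dataclass
class Agent:
    name: str
    free_data_mb: float   # private constraint, visible only to its federation


class Federation:
    def __init__(self, name, agents, cost_per_mb):
        self.name = name
        self._agents = agents            # hidden from other federations
        self._cost_per_mb = cost_per_mb

    def _capable(self, request_mb):
        return [a for a in self._agents if a.free_data_mb >= request_mb]

    def bid(self, request_mb):
        """Return a price, or None if no agent can take the request."""
        if not self._capable(request_mb):
            return None
        return request_mb * self._cost_per_mb

    def assign(self, request_mb):
        """Internal distribution: pick the agent with the most spare capacity."""
        agent = max(self._capable(request_mb), key=lambda a: a.free_data_mb)
        agent.free_data_mb -= request_mb
        return agent.name


def award(request_mb, federations):
    """Give the request to the lowest valid bid; None if nobody can serve it."""
    bids = [(f.bid(request_mb), f) for f in federations]
    valid = [(price, f) for price, f in bids if price is not None]
    if not valid:
        return None
    price, winner = min(valid, key=lambda pf: pf[0])
    return winner.name, winner.assign(request_mb), price


fed_a = Federation("fed_a", [Agent("A1", 50), Agent("A2", 200)], cost_per_mb=2.0)
fed_b = Federation("fed_b", [Agent("B1", 500)], cost_per_mb=1.5)
print(award(100, [fed_a, fed_b]))
```

The broker only ever sees prices, never the agents or their constraints, which mirrors the information boundary the paper describes between federations.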

2031 onwards: Edge dissolves into the space compute economy

Space-based edge computing is on a rapid growth trajectory, with strong demand for low-latency, real-time analytics services and adoption across commercial and government users. Market projections indicate sustained expansion driven by AI-enabled onboard processing and autonomous operations.

I expect the emergence and maturing of processing-as-a-service in orbit and of mission software licensing models, with constellations beginning to monetize insights, not imagery. We transition from space infrastructure to a space compute economy. By the end of the decade, edge computing in space may stop being discussed as “edge”.

It simply becomes how space systems work. Key characteristics of this system are:

Dedicated missions for extreme-environment monitoring are already planned with advanced onboard processing (e.g., next-gen missions targeting 2030 timelines).

Constellations operate as persistent compute fabrics, tightly integrated with terrestrial cloud.

System-level shifts:

Data flow becomes selective, prioritized and intent-driven.

Satellites participate in real-time decision loops, like dynamic weather forecasting.

Industry convergence: Edge + AI + ISL become a unified architecture, and space becomes part of a 3D compute network (space–air–ground). The distinction between “satellite system”, “data system” and “compute system” starts to dissolve.